Rime Labs speaks at Bay Area NLP

With GPT-4 on everyone's mind, Rime Labs CEO leads a discussion on the differences between large text models and large speech models

Yesterday, Rime CEO Lily Clifford presented an analysis of the exciting Large Language Model (LLM) space to dozens of leaders in the language-tech world. A video of the presentation and discussion, hosted by Rob Monarch, will be posted soon, but in the meantime here’s a short recap!

The differences between Text and Speech

Lily opened the presentation with an in-depth rundown of the fundamental differences between text and speech. Drawing on her background in Sociolinguistics and Machine Learning, she explored the vast and rich social information that is inherent to speech and that is unavoidably lost in text, despite countless vain attempts to preserve it (like italics, alternate spellings, and novel punctuation).

Speech expresses the social background of the speaker (age, sex, dialect), their stance and affect (sarcasm, sincerity, anger), and contrastive focus (‘*John* doesn’t like Mary’ versus ‘John doesn’t *like* Mary’ versus ‘John doesn’t like *Mary*’). Text generally fails to represent these distinctions, and because of this, LLMs trained on text cannot express the rich social information that speech encodes.

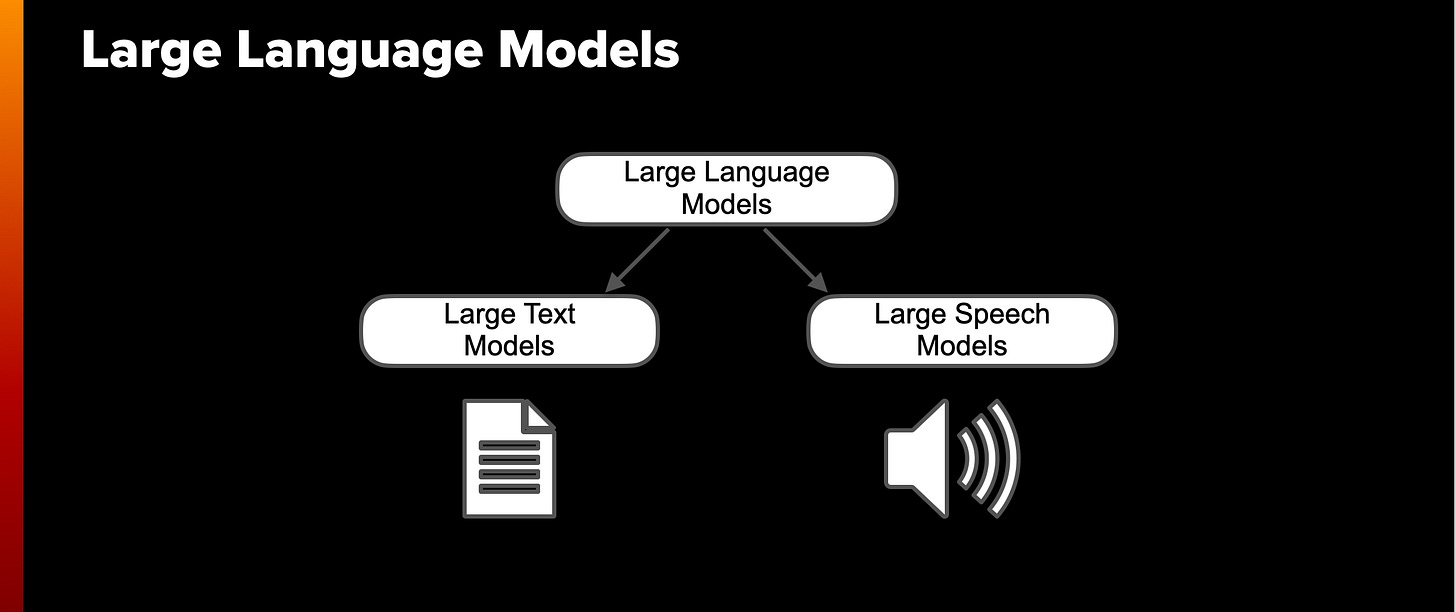

There’s no such thing as a Large Language Model

Lily then got into the meat of the presentation with a bold claim: what everyone has been calling Large Language Models should actually be called Large Text Models. LLMs are trained on massive amounts of simple text, and though they are undoubtedly impressive, text is only one component of language. In light of the fundamental differences between text and speech, Lily proposed the analogous term Large Speech Models for models that capture the social richness of spoken language.

The practical distinctions between text models and speech models are stark. Every day, millions of people are feeding the internet with more and more text on which text models can train. This text is by its very nature discrete and properly encoded. Speech, on the other hand, is a different matter. There is much less speech data on the internet, and what is there is often mixed with music, sound effects, and other speech, making it much more difficult to train on. Indeed, the relative ease of working with text helps explain why text models have been conflated with language models as a whole.

Rime’s approach to Speech Models and TTS

Because speech can encode so much rich social information, speech models should take advantage of that to make the most immediately impactful and compelling products. At Rime, we’re guided by our expertise in the social components of language, and we are creating voice products for every context: products that are natural and conversational, and for which diversity of voices is never a limiting factor. An example of these principles in action can be heard in the clip below, created with the voice of a person who doesn’t exist, with rich social nuance and conversational naturalness.

We will post a video recording of this talk as soon as it’s made public!